Developers don’t usually wake up thinking about rankings. They think about deployments, performance budgets, clean code, and release timelines. Yet somewhere down the line, someone from marketing or the SEO team says the site isn’t being indexed properly, pages aren’t appearing fast enough, or organic growth has stalled. Suddenly, SEO becomes a developer problem — and often, a frustrating one.

The reality is simple: search engines experience websites differently than users do. A site that looks perfect in the browser can still be difficult to crawl, slow to render, or unclear in structure for bots. That gap between user experience and crawlability is where technical decisions quietly shape visibility. When developers understand those fundamentals early, the payoff is concrete: faster indexing, fewer surprises after deployment, and fewer urgent fixes later on.

This guide takes a practical, checklist-driven approach to technical SEO. No abstract theory, no vague marketing advice. Instead, it focuses on the technical layers developers actually control: rendering, site structure, indexing signals, deployment workflows, and performance choices that directly influence how search engines crawl and index a site. Whether you’re working on a traditional CMS, a modern JavaScript framework, or a distributed web app, technical SEO is mostly about predictable engineering decisions.

And here’s the thing that often gets overlooked — improving SEO fundamentals usually improves overall product quality too. Clean architecture benefits maintainability. Faster load times improve user satisfaction. Clear URL structures reduce confusion for everyone.

You don’t need to become an SEO expert to build search-friendly products. You just need to understand how crawlers interpret your work. This article bridges that gap, helping developers and in-house SEO teams collaborate without friction. Think of it as a practical handbook for building sites that search engines can discover quickly, interpret accurately, and index efficiently.

Let’s start with the layer that controls everything else: crawlability.

Crawlability Basics: Robots, Sitemaps, And Canonical Rules

Before search engines can rank anything, they must crawl it. Sounds obvious, yet many indexing problems begin with misconfigured basics. A solid technical SEO mindset for developers starts by treating crawlability like infrastructure rather than an afterthought.

Robots.txt should guide crawlers, not block essential resources. Accidentally blocking JavaScript or CSS files can prevent proper rendering, so crawlers judge a broken version of the page. XML sitemaps act as discovery maps, helping bots find important pages quickly. But sitemaps that are outdated or filled with low-value URLs become a less reliable signal over time.
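One way to catch accidental blocks is to test robots.txt against the asset URLs your pages depend on before each deploy. The minimal sketch below uses Python's standard `urllib.robotparser`; the robots.txt rules, the `example.com` URLs, and the `blocked_urls` helper are hypothetical examples, not a recommended rule set.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keeps crawlers out of /admin/ and /cart
# but leaves CSS and JavaScript bundles crawlable for rendering.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart

Sitemap: https://www.example.com/sitemap.xml
"""

# Hypothetical render-critical URLs that must stay fetchable.
CRITICAL_URLS = [
    "https://www.example.com/static/app.js",
    "https://www.example.com/static/main.css",
    "https://www.example.com/products/blue-widget",
]


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    problems = blocked_urls(ROBOTS_TXT, CRITICAL_URLS)
    if problems:
        raise SystemExit(f"robots.txt blocks critical URLs: {problems}")
    print("All critical URLs are crawlable.")
```

Running this in CI turns a silent rendering problem into a failed build.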

Canonical rules deserve special attention. A consistent canonicalization strategy helps avoid duplicate-content confusion across filtered pages, tracking parameters, and alternate URLs. Search engines rely on these signals to identify the preferred version of a page, especially in dynamic systems.
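In dynamic systems it often helps to compute the canonical URL in one place instead of per template. The sketch below assumes a hypothetical policy: force HTTPS, lowercase the host, drop common tracking parameters, sort the remaining parameters, and emit a `<link rel="canonical">` tag. The parameter lists and helper names are assumptions to adapt per site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; extend these for your analytics setup.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"gclid", "fbclid", "ref"}


def canonical_url(url: str) -> str:
    """Normalize a URL to one preferred form (hypothetical policy)."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS and not key.startswith(TRACKING_PREFIXES)
    ]
    query.sort()  # a stable parameter order avoids duplicate URL variants
    path = parts.path or "/"
    # The fragment is dropped: it never reaches the server anyway.
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(query), ""))


def canonical_link_tag(url: str) -> str:
    """Render the <link> element for the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


print(canonical_link_tag("http://WWW.Example.com/shoes?utm_source=mail&size=9&color=red"))
# → <link rel="canonical" href="https://www.example.com/shoes?color=red&amp;size=9">
```

Sorting parameters assumes their order carries no meaning in your application; if it does, keep the original order.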

Think of crawlability like road signs. If directions conflict, crawlers slow down. When signals align, indexing happens faster and more predictably.

Rendering, SSR Versus CSR, And SEO Implications

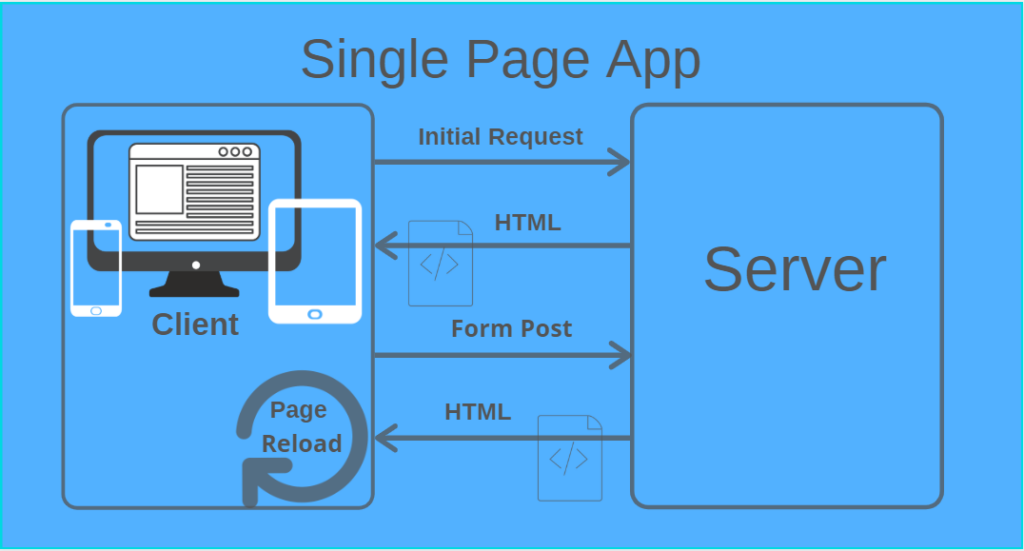

Modern frameworks introduced flexibility but also complexity. Client-side rendering (CSR) often delays content visibility for crawlers, which can slow indexing. Search engines can render JavaScript, yes, but rendering consumes resources, so content that only appears after JavaScript runs may be indexed later.

For many projects, server-side rendering (SSR) provides a practical balance. Pre-rendering content ensures essential HTML is available in the initial response, improving crawl efficiency. Hybrid rendering models often work well, too: server-render key pages while allowing dynamic interactions afterward.

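Developers building single-page apps (SPAs) should pay particular attention to routing and metadata updates. Each route should have its own crawlable URL, and dynamic title tags, meta descriptions, and structured content should be present in the initial HTML response, not only injected after JavaScript execution.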

A common scenario: an app performs flawlessly for users but shows empty shells to bots initially. Small rendering adjustments often fix months of indexing issues.
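A quick way to spot the empty-shell problem is to inspect the raw HTML the server returns, before any JavaScript runs (fetch it with a plain HTTP client, not from the browser's live DOM). The sketch below uses Python's standard `html.parser` to pull out the `<title>` and count visible text outside `<script>` and `<style>`; the `ShellInspector` class and the 200-character threshold are arbitrary assumptions for illustration.

```python
from html.parser import HTMLParser


class ShellInspector(HTMLParser):
    """Collects the title, meta description, and visible text from raw HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self.text_chars = 0
        self._in_title = False
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "meta" and attrs.get("name") == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_title:
            self.title += data.strip()
        elif not self._skip_depth:
            self.text_chars += len(data.strip())


def looks_like_empty_shell(raw_html: str, min_text_chars: int = 200) -> bool:
    """True if the server HTML lacks a title or meaningful body text."""
    inspector = ShellInspector()
    inspector.feed(raw_html)
    inspector.close()
    return not inspector.title or inspector.text_chars < min_text_chars


spa_shell = (
    '<html><head><title></title></head>'
    '<body><div id="root"></div><script src="/app.js"></script></body></html>'
)
print(looks_like_empty_shell(spa_shell))  # → True
```

This only checks the initial HTML; it says nothing about what a rendering crawler eventually sees, which is exactly the gap the check is meant to expose.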

Speed And Core Web Vitals

Performance affects both users and visibility. Slow pages frustrate users, and search engines use performance signals as one indirect measure of quality. Improving Core Web Vitals (Largest Contentful Paint, Interaction to Next Paint, and Cumulative Layout Shift) usually starts with fundamentals: optimized images, smaller JavaScript bundles, efficient caching, and fewer layout shifts.
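When triaging field data, it helps to bucket each metric against Google's published Core Web Vitals thresholds, which are assessed at the 75th percentile of page loads: LCP is good at or below 2.5 s and poor above 4 s, INP is good at or below 200 ms and poor above 500 ms, and CLS is good at or below 0.1 and poor above 0.25. The sketch below uses a simple nearest-rank 75th percentile; the sample values are made up, and real tooling (CrUX or a RUM provider) may compute percentiles differently.

```python
import math

# Core Web Vitals thresholds: (good_max, needs_improvement_max).
# LCP in seconds, INP in milliseconds, CLS is unitless.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def p75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile of field samples."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def rate(metric: str, samples: list[float]) -> str:
    """Classify a metric as good, needs improvement, or poor at p75."""
    good_max, ni_max = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good_max:
        return "good"
    if value <= ni_max:
        return "needs improvement"
    return "poor"


# Hypothetical LCP samples (seconds) collected from real users.
print(rate("LCP", [1.8, 2.1, 2.4, 2.9, 3.6, 2.2, 2.0, 4.8]))  # → needs improvement
```

Looking at the 75th percentile rather than the average keeps a slow minority of visits from hiding behind fast ones.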

Developers sometimes chase micro-optimizations while ignoring bigger wins. Reducing third-party scripts or improving server response times usually delivers larger gains. This matters especially for web apps, where frameworks can add heavy client-side overhead.

Performance tuning isn’t about perfect scores. It’s about consistent, realistic improvements that keep interaction smooth. Small improvements compound across thousands of page visits.

Think practically. Faster rendering means faster crawling, better engagement, and fewer abandoned sessions — all aligned outcomes.

Indexing Signals Through Structured Data

Search engines rely on context, not just content. A structured data checklist helps bots understand entities, relationships, and page purpose more clearly. Structured data isn’t a ranking trick; it’s a communication protocol.

Developers can implement schema markup for products, articles, organizations, and services, depending on the site type. Even simple additions clarify intent and can improve eligibility for rich results.

For local or service-oriented sites, LocalBusiness schema is especially useful because it reinforces business details and geographic relevance. Accuracy matters more than volume here: incorrect or outdated markup can create confusion rather than clarity.
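Structured data is commonly embedded as JSON-LD inside a `<script type="application/ld+json">` element. The sketch below generates a schema.org `LocalBusiness` object from a hypothetical business record; every business detail is placeholder data, and the properties shown are a minimal subset, not a complete or required list.

```python
import json

# Placeholder business record; in practice this would come from a CMS or database.
business = {
    "name": "Example Plumbing Co.",
    "url": "https://www.example.com/",
    "telephone": "+1-555-0100",
    "street": "123 Main St",
    "city": "Springfield",
    "region": "IL",
    "postal_code": "62701",
    "country": "US",
}


def local_business_jsonld(b: dict) -> str:
    """Build a schema.org LocalBusiness JSON-LD script element."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": b["name"],
        "url": b["url"],
        "telephone": b["telephone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": b["street"],
            "addressLocality": b["city"],
            "addressRegion": b["region"],
            "postalCode": b["postal_code"],
            "addressCountry": b["country"],
        },
    }
    # Escape "<" so no value can close the script element early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(local_business_jsonld(business))
```

Generating markup from the same record that drives the visible page helps keep the two in sync, which is where accuracy problems usually start.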

Structured data should be validated during deployment workflows. Automation helps catch errors early, preventing quiet indexing issues after launches.
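A lightweight pre-deploy gate can at least confirm that every JSON-LD block on a rendered page parses and declares `@context` and `@type`. This is a syntax-level sketch under our own assumptions (the `jsonld_errors` helper is hypothetical); it does not replace Google's Rich Results Test or the Schema Markup Validator, which check vocabulary and eligibility.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw contents of <script type="application/ld+json"> blocks."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._capturing = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capturing = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self.blocks[-1] += data


def jsonld_errors(html: str) -> list[str]:
    """Return human-readable problems found in a page's JSON-LD blocks."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    errors = []
    for i, block in enumerate(parser.blocks):
        try:
            data = json.loads(block)
        except json.JSONDecodeError as exc:
            errors.append(f"block {i}: invalid JSON ({exc.msg})")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict):
                errors.append(f"block {i}: expected an object")
            elif "@context" not in item:
                errors.append(f"block {i}: missing @context")
            elif "@type" not in item and "@graph" not in item:
                errors.append(f"block {i}: missing @type")
    return errors
```

A CI step could run this over a handful of rendered templates and fail the build whenever the returned list is non-empty.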

URL Structure And Site Hierarchy

Clean URLs help both users and crawlers understand relationships between pages. A clear hierarchy strengthens site architecture and lets authority flow naturally through internal links.

Flat structures can work for small sites, but growing platforms benefit from logical categorization. Keep URLs readable, consistent, and stable. Frequent structural changes create unnecessary redirects and dilute signals.
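Readable, stable URLs usually come from generating a slug once and storing it, rather than recomputing it from a title that may change later. The minimal `slugify` below is a hypothetical helper that assumes "readable" means lowercase ASCII words joined by hyphens.

```python
import re
import unicodedata


def slugify(title: str, max_length: int = 60) -> str:
    """Turn a title into a lowercase, hyphen-separated URL slug."""
    # Decompose accented characters, then drop anything non-ASCII (é -> e).
    ascii_text = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    )
    # Replace every run of non-alphanumeric characters with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    # Trim long slugs on a word boundary so words are not cut in half.
    if len(slug) > max_length:
        cut = slug[:max_length]
        if slug[max_length] != "-":  # the cut landed mid-word
            cut = cut.rsplit("-", 1)[0]
        slug = cut.strip("-")
    return slug


print(slugify("Café Owners’ Guide: 10 Tips & Tricks!"))  # → cafe-owners-guide-10-tips-tricks
```

If a title is edited after publication, keeping the stored slug avoids a redirect; if the slug must change, add a permanent redirect from the old URL.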

Avoid overcomplicated parameters when possible. Dynamic systems often generate infinite combinations that waste crawl resources. A thoughtful structure supports crawl budget best practices, ensuring bots focus on high-value content.

Think of site architecture like a library. If books are scattered randomly, discovering relevant material becomes slower for everyone.

Handling Pagination, Facets, And Thin Pages

Faceted navigation introduces serious crawl complexity. Filters that generate endless URL combinations can consume crawl resources quickly. Managing these patterns deliberately keeps crawl budget focused on valuable pages while protecting index quality.

Pagination should preserve discoverability without creating duplicate-content confusion. Crawlable links between paginated pages, self-referencing canonical tags on each page in a series, and noindex rules for low-value filter combinations help guide crawlers toward primary pages.
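The scale is easy to underestimate: five filters with four options each, where every filter can also be left unset, already yield (4 + 1)^5 = 3,125 URL variants for a single category page. The sketch below encodes one hypothetical indexing policy: the unfiltered category and single allowlisted facets stay indexable, pagination stays indexable, and sort/view variants or multi-facet combinations get `noindex, follow`. The parameter names and allowlist are assumptions. Note that noindex controls indexing, not crawling: crawlers must still fetch a page to see the tag, so saving crawl budget may also require robots.txt rules for parameter patterns.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical allowlist: facets with real search demand that deserve
# their own indexable landing pages (e.g. /shoes?color=red).
INDEXABLE_FACETS = {"color", "brand"}
# Hypothetical parameters that only reorder or restyle existing content.
NON_INDEXABLE_PARAMS = {"sort", "view", "page_size"}


def robots_meta_for(url: str) -> str:
    """Decide the robots meta content for a faceted listing URL."""
    params = dict(parse_qsl(urlsplit(url).query))
    params.pop("page", None)  # assumption: paginated pages stay indexable
    keys = set(params)
    if keys & NON_INDEXABLE_PARAMS:
        return "noindex, follow"  # sort/view variants duplicate existing pages
    if not keys or (len(keys) == 1 and keys <= INDEXABLE_FACETS):
        return "index, follow"  # unfiltered category or one allowlisted facet
    return "noindex, follow"  # multi-facet or non-allowlisted combinations
```

Centralizing the rule in one function makes it easy to review with the SEO team before the filtering system ships.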

Thin pages often appear unintentionally through automated systems. Monitoring generated pages and consolidating low-value content helps maintain site quality signals.

Developers and SEO teams should collaborate early when designing filtering systems. Prevention always costs less than cleanup later.

Security And HTTPS Best Practices

Security is foundational for trust. HTTPS is non-negotiable today, but implementation details matter. Mixed content warnings, improper redirects, or expired certificates can harm usability and indirectly impact visibility.

Consistent redirects from HTTP to HTTPS help consolidate authority signals. Secure headers and clean certificate management also support long-term stability.
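Two checks cover much of this: every HTTP URL should answer with a single permanent (301 or 308) redirect to the same path on HTTPS, and HTTPS pages should not load `http://` subresources. The sketch below expresses both as pure functions you could test against; the helper names are hypothetical, the redirect itself normally lives in your web server, load balancer, or CDN, and the regex only inspects `src` attributes, so stylesheets linked via `href` are not covered.

```python
import re
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

# Rough check: src attributes that load a plain-http resource.
MIXED_CONTENT = re.compile(r"""\bsrc\s*=\s*["'](http://[^"']+)""", re.IGNORECASE)


def https_redirect_target(url: str) -> Optional[str]:
    """Return where an HTTP URL should permanently redirect, or None if already HTTPS."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        return None
    # Keep host, path, and query so each HTTP URL maps to exactly one HTTPS URL.
    # Assumption: HTTPS runs on the default port, so any explicit port is dropped.
    host = parts.hostname or ""
    return urlunsplit(("https", host, parts.path or "/", parts.query, ""))


def mixed_content(html: str) -> list[str]:
    """List http:// URLs loaded through src attributes on an HTTPS page."""
    return MIXED_CONTENT.findall(html)
```

Mapping each old URL to exactly one new URL, in one hop, is what lets signals consolidate instead of splitting across redirect chains.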

Search engines view secure environments as reliability signals. Developers already care about security for users; SEO benefits simply align with good engineering hygiene.

Trust isn’t built through one setting. It emerges from consistency across the entire experience.

Monitoring Logs, Indexing Reports, And Alerts

Monitoring often separates proactive teams from reactive ones. Log analysis reveals how crawlers actually interact with your site, not how you assume they do. Patterns can highlight blocked resources, crawling loops, or neglected sections.
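Crawler log analysis can start small. The sketch below parses access-log lines in the common Apache/Nginx "combined" format and counts Googlebot requests by status code and by top-level section. It matches on the user-agent string only, which can be spoofed; confirming genuine Googlebot traffic requires a reverse-DNS lookup, which this sketch omits. The `googlebot_summary` helper and the sample lines are fabricated for illustration.

```python
import re
from collections import Counter

# Combined log format: ip - user [time] "METHOD path PROTO" status size "referer" "agent"
LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_summary(lines):
    """Count Googlebot hits per status code and per first path segment."""
    by_status, by_section = Counter(), Counter()
    for line in lines:
        match = LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        by_status[match["status"]] += 1
        path = match["path"].split("?", 1)[0]
        by_section["/" + path.lstrip("/").split("/", 1)[0]] += 1
    return by_status, by_section


UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [10/Mar/2024:10:00:00 +0000] "GET /products/blue-widget HTTP/1.1" 200 5120 "-" "{UA}"',
    f'66.249.66.1 - - [10/Mar/2024:10:00:05 +0000] "GET /search?q=shoes&page=9 HTTP/1.1" 200 2048 "-" "{UA}"',
    f'66.249.66.1 - - [10/Mar/2024:10:00:09 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "{UA}"',
    '203.0.113.7 - - [10/Mar/2024:10:01:00 +0000] "GET /products/blue-widget HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

statuses, sections = googlebot_summary(SAMPLE)
print(statuses)
print(sections)
```

Even this rough view can surface the patterns mentioned above, such as crawl time spent on internal search URLs or repeated hits on pages that return 404.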

Google Search Console’s indexing reports provide additional insight into coverage and errors. Combining these with automated alerts helps catch issues early.

A practical technical SEO workflow for developers includes regular checks after major releases. Small deployment changes sometimes alter crawl behavior unexpectedly.
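One way to make post-release checks routine is to snapshot a few SEO-relevant fields per URL before and after a deploy and diff them. The snapshot shape below (status code, canonical, robots meta) and the `release_diff` helper are hypothetical; how you collect snapshots, whether with a crawler or a script over a fixed URL list, is left open. This version reports changed and missing URLs only, not newly added ones.

```python
from typing import NamedTuple


class PageSnapshot(NamedTuple):
    status: int
    canonical: str
    robots: str


def release_diff(before: dict[str, PageSnapshot], after: dict[str, PageSnapshot]) -> list[str]:
    """Describe SEO-relevant changes between two crawl snapshots."""
    changes = []
    for url, old in sorted(before.items()):
        new = after.get(url)
        if new is None:
            changes.append(f"{url}: missing after release")
            continue
        for field in PageSnapshot._fields:
            if getattr(old, field) != getattr(new, field):
                changes.append(f"{url}: {field} {getattr(old, field)!r} -> {getattr(new, field)!r}")
    return changes


home = "https://www.example.com/"
pricing = "https://www.example.com/pricing"
before = {
    home: PageSnapshot(200, home, "index, follow"),
    pricing: PageSnapshot(200, pricing, "index, follow"),
}
after = {
    home: PageSnapshot(200, home, "index, follow"),
    pricing: PageSnapshot(200, pricing, "noindex, follow"),
}

for change in release_diff(before, after):
    print(change)
# → https://www.example.com/pricing: robots 'index, follow' -> 'noindex, follow'
```

Wiring the output into an alert channel turns an accidental noindex into a same-day fix rather than a slow traffic decline.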

Monitoring doesn’t need to be overwhelming. Even periodic reviews can prevent weeks of unnoticed indexing decline.

Deployment Considerations: Staging Versus Production Pitfalls

Deployment workflows frequently cause SEO issues. Staging environments indexed accidentally, missing redirects after migrations, or incorrect canonical tags are surprisingly common.

Clear separation between staging and production prevents indexing confusion. Noindex directives should exist on staging but be removed cleanly during launches. Automated QA checks help ensure this transition happens safely.
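An automated QA gate can assert the expected state per environment: staging responses should carry a noindex directive (through the `X-Robots-Tag` header or a robots meta tag), and production responses must not. The sketch below checks both signals on an already-fetched response; the environment names, helper names, and how headers and HTML are fetched are assumptions, and the meta-tag regex is rough (it expects `name` before `content`). A noindex directive only works if crawlers are allowed to fetch the page, so it should not be paired with a robots.txt `Disallow` for the same URLs; HTTP authentication is a stronger way to keep staging private.

```python
import re

# Rough regex for <meta name="robots" content="..."> with name before content.
ROBOTS_META = re.compile(
    r"""<meta[^>]+name=["']robots["'][^>]+content=["']([^"']*)["']""", re.IGNORECASE
)


def has_noindex(headers: dict[str, str], html: str) -> bool:
    """True if either the X-Robots-Tag header or a robots meta tag says noindex."""
    header = next((v for k, v in headers.items() if k.lower() == "x-robots-tag"), "")
    metas = ROBOTS_META.findall(html)
    return any("noindex" in value.lower() for value in [header, *metas])


def check_environment(env: str, headers: dict[str, str], html: str) -> None:
    """Raise if the noindex state does not match the environment."""
    noindex = has_noindex(headers, html)
    if env == "staging" and not noindex:
        raise AssertionError("staging page is indexable")
    if env == "production" and noindex:
        raise AssertionError("production page carries noindex")
```

Running the production branch of this check immediately after launch catches the classic mistake of shipping staging's noindex to the live site.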

Teams running on cloud infrastructure such as AWS often benefit from consistent CDN configuration and predictable caching rules. Infrastructure decisions influence crawl behavior more than many expect.
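A predictable caching policy usually separates fingerprinted static assets, which can be cached for a long time because their URLs change on every build, from HTML, which should be revalidated so crawlers and users see fresh content after a deploy. The `Cache-Control` directives below are standard HTTP, but the path patterns, max-age values, and the `cache_control_for` helper are assumptions for illustration, not AWS- or CloudFront-specific settings.

```python
import re

# Assumed build convention: bundles carry a content hash, e.g. app.3f9a1c2b.js
FINGERPRINTED = re.compile(r"\.[0-9a-f]{8,}\.(?:js|css|woff2|png|jpg|webp|svg)$")


def cache_control_for(path: str) -> str:
    """Pick a Cache-Control header value for a response path."""
    if FINGERPRINTED.search(path):
        # Content-addressed: safe to cache for a year without revalidating.
        return "public, max-age=31536000, immutable"
    if path.endswith(("/robots.txt", "/sitemap.xml")):
        # Discovery files: short cache so crawlers pick up changes quickly.
        return "public, max-age=3600"
    # HTML and everything else: browsers revalidate; shared caches (the CDN)
    # may serve it for up to five minutes.
    return "public, max-age=0, s-maxage=300"
```

Keeping this logic in one place, whether in code or in CDN configuration, is what makes caching behavior predictable across deploys.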

Deployment checklists reduce risk. SEO problems are often deployment problems wearing a different name.

Quick Audit Template For Developers

A practical two-hour audit helps identify major issues quickly. Start by reviewing crawlability basics, robots rules, and sitemap accuracy. Then test rendering behavior to ensure essential content appears without heavy JavaScript execution.

Check performance metrics focusing on core bottlenecks rather than edge cases. Review canonical implementation and internal linking structure. Confirm HTTPS consistency and monitor indexing reports for anomalies.

A lightweight review of the sitemap and robots.txt alone can reveal surprising issues. Developers who run regular audits catch problems before they significantly affect rankings.
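As one concrete audit step, the sketch below parses a sitemap with Python's standard `xml.etree.ElementTree` and flags duplicate entries and URLs that do not return 200 (redirects, 404s, or URLs you have no status for). The `status_by_url` mapping is an assumed input you would collect separately, for example from a crawl, and the sample sitemap is placeholder data.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Extract <loc> values from a standard <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def sitemap_issues(xml_text: str, status_by_url: dict[str, int]) -> list[str]:
    """Flag duplicates and entries whose known status is not 200."""
    issues = []
    seen = set()
    for url in sitemap_urls(xml_text):
        if url in seen:
            issues.append(f"duplicate entry: {url}")
        seen.add(url)
        status = status_by_url.get(url)
        if status != 200:
            issues.append(f"{url}: status {status}")  # redirect, error, or unknown
    return issues


SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
</urlset>"""
statuses = {"https://www.example.com/": 200, "https://www.example.com/old-page": 301}
print(sitemap_issues(SITEMAP, statuses))  # → ['https://www.example.com/old-page: status 301']
```

A sitemap that lists only live, canonical URLs stays a trustworthy discovery signal.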

Think of this audit as maintenance, similar to checking logs or dependency updates. Routine work that prevents bigger issues later.

Conclusion

Technical SEO isn’t separate from development; it’s an extension of building reliable web experiences. Clean architecture, efficient rendering, strong indexing signals, and thoughtful deployment practices all help search engines discover and trust your content faster. Start with fundamentals, monitor consistently, and collaborate early with SEO teams. If you want faster troubleshooting, create a reusable technical audit checklist that your team can run before every major release. Small engineering decisions shape long-term visibility more than most realize.

FAQs

Why should developers care about technical SEO at all?

Developers control how search engines crawl and render websites. Technical decisions around architecture, rendering, and performance directly affect indexing speed and visibility, often more than content changes alone.

Is server-side rendering always better for SEO?

Not always, but SSR often improves initial crawlability and indexing speed. Hybrid approaches can balance performance and flexibility depending on the application’s complexity.

How often should technical SEO audits be performed?

Light monthly audits and deeper reviews after major deployments work well. Frequent checks help catch rendering or indexing issues before they affect search performance.

Do single-page apps always struggle with SEO?

No. Proper routing, metadata handling, and pre-rendering strategies allow SPAs to perform well in search when implemented thoughtfully.

What causes slow indexing for new pages?

Common causes include weak internal linking, crawl budget waste, rendering delays, or missing sitemap entries. Clear discovery paths typically accelerate indexing.

Can AWS hosting impact SEO performance?

Yes, indirectly. CDN setup, caching configurations, and response times influence crawl efficiency and user experience, which can affect visibility over time.